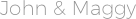

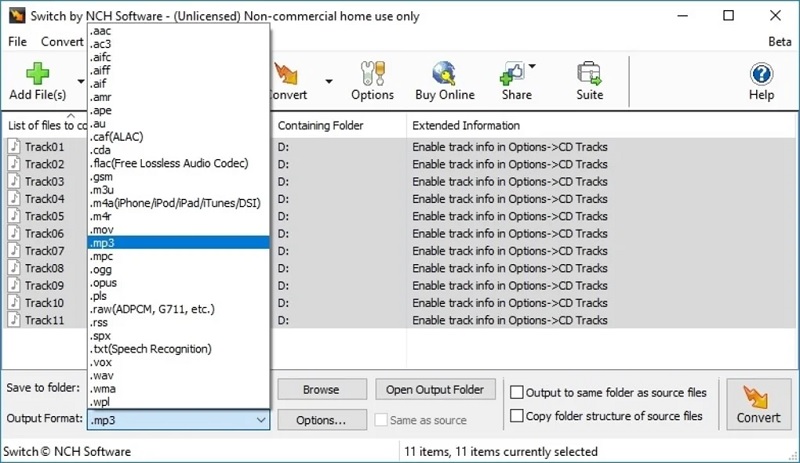

Lance is a new columnar data format for data science and machine learning. It:

- Is an order of magnitude faster than Parquet for point queries and for the nested data structures common in DS/ML.
- Comes with a fast vector index that delivers sub-millisecond nearest-neighbor search performance.
- Is automatically versioned and supports lineage and time travel for full reproducibility.
- Is already integrated with DuckDB, pandas, and Polars, and converts from/to Parquet in two lines of code.

Make sure you have recent versions of pandas (1.5+), pyarrow (10.0+), and DuckDB (0.7.0+). Lance `>=0.3.0` is the new Rust-based implementation.

Converting to Lance:

```python
import lance
import pandas as pd
import pyarrow as pa
import pyarrow.dataset

# Illustrative data; any DataFrame works here
df = pd.DataFrame({"a": [5], "b": [10]})
uri = "/tmp/test.parquet"
tbl = pa.Table.from_pandas(df)
pa.dataset.write_dataset(tbl, uri, format="parquet")

# Open the Parquet dataset and write it back out in Lance format
parquet = pa.dataset.dataset(uri, format="parquet")
lance.write_dataset(parquet, "/tmp/test.lance")
```

Reading the Lance dataset:

```python
dataset = lance.dataset("/tmp/test.lance")
assert isinstance(dataset, pa.dataset.Dataset)
```

Pandas:

```python
df = dataset.to_table().to_pandas()
```

DuckDB:

```python
import duckdb

# If this segfaults, make sure you have duckdb v0.7+ installed.
# DuckDB resolves `dataset` in the SQL to the Python variable of that name.
duckdb.query("SELECT * FROM dataset LIMIT 10").to_df()
```

Vector search, converting the SIFT base vectors to Lance:

```python
import struct

import lance
import numpy as np
from lance.vector import vec_to_table

nvecs = 1000000
ndims = 128
with open("sift/sift_base.fvecs", mode="rb") as fobj:
    buf = fobj.read()
    # ...
```

The Python integration is done via pyo3 plus custom Python code:

1. We make wrapper classes in Rust for Dataset/Scanner/RecordBatchReader that are exposed to Python.
2. These are then used by LanceDataset / LanceScanner implementations that extend pyarrow Dataset/Scanner for DuckDB compatibility.
3. Data is delivered via the Arrow C Data Interface.

To install from source, run `maturin develop` under the /python directory.

Why do we need a new format for data science and machine learning?

1. Versioning and experimentation support should be built into the dataset instead of requiring multiple tools. It should also be efficient and not require expensive copying every time you want to create a new version. We call this "Zero copy versioning" in Lance. It makes versioning data easy without increasing storage costs.
2. Remote object storage is now the default for data science and machine learning, and the performance characteristics of the cloud are fundamentally different. The Lance format is optimized to be cloud native. Common operations like filter-then-take can be an order of magnitude faster using Lance than Parquet, especially for ML data.
3. Vectors must be a first-class citizen, not a separate thing. The majority of reasonable-scale workflows should not require the added complexity and cost of a specialized database just to compute vector similarity. Instead, Lance integrates optimized vector indices.
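The vector search example reads the SIFT base vectors from `sift/sift_base.fvecs`. In the TEXMEX `.fvecs` layout, each record is a little-endian int32 dimension `d` followed by `d` float32 components. The sketch below is a minimal stdlib-only reader that assumes that layout. It is a slower but more explicit alternative to the `numpy`/`struct` approach in the example, and the function name `read_fvecs` is hypothetical, not part of Lance.

```python
import struct
from typing import List


def read_fvecs(path: str) -> List[List[float]]:
    """Read an .fvecs file: each record is an int32 dimension followed by
    that many float32 values, all little-endian (hypothetical helper)."""
    vectors: List[List[float]] = []
    with open(path, mode="rb") as fobj:
        while True:
            header = fobj.read(4)
            if not header:
                break  # clean end of file
            if len(header) != 4:
                raise ValueError("truncated dimension header")
            (dim,) = struct.unpack("<i", header)
            payload = fobj.read(4 * dim)
            if len(payload) != 4 * dim:
                raise ValueError("truncated vector payload")
            vectors.append(list(struct.unpack(f"<{dim}f", payload)))
    return vectors


if __name__ == "__main__":
    import os
    import tempfile

    # Write two 3-dimensional vectors in .fvecs layout, then read them back.
    sample = [[1.0, 2.0, 3.0], [0.5, -1.5, 4.0]]
    with tempfile.NamedTemporaryFile(suffix=".fvecs", delete=False) as tmp:
        for vec in sample:
            tmp.write(struct.pack("<i", len(vec)))
            tmp.write(struct.pack(f"<{len(vec)}f", *vec))
        path = tmp.name
    print(read_fvecs(path))  # [[1.0, 2.0, 3.0], [0.5, -1.5, 4.0]]
    os.remove(path)
```

Because every record carries its own dimension header, the reader works for any `d`. A fixed-offset slice over the whole buffer would only be correct if those per-record headers were skipped.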
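The "Zero copy versioning" idea can be illustrated with a toy model. The model assumes that data lives in immutable fragments and that each version is just a small manifest listing the fragments it contains. This illustrates the general technique and is not Lance's actual on-disk design. Appending writes one new fragment and one new manifest, older versions stay readable (time travel), and no existing rows are copied. All names here (`Fragment`, `VersionedDataset`, `append`, `read`) are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Fragment:
    """An immutable chunk of rows; once written it is never modified."""
    fragment_id: int
    rows: Tuple[dict, ...]


@dataclass
class VersionedDataset:
    """Toy model (hypothetical): each version is a manifest of fragment ids."""
    fragments: Dict[int, Fragment] = field(default_factory=dict)
    manifests: List[Tuple[int, ...]] = field(default_factory=lambda: [()])

    @property
    def latest_version(self) -> int:
        return len(self.manifests) - 1

    def append(self, rows: List[dict]) -> int:
        """Write one new fragment plus a manifest that reuses all old fragments."""
        frag = Fragment(len(self.fragments), tuple(rows))
        self.fragments[frag.fragment_id] = frag
        # Only fragment ids are copied into the new manifest, never rows.
        self.manifests.append(self.manifests[-1] + (frag.fragment_id,))
        return self.latest_version

    def read(self, version: int) -> List[dict]:
        """Time travel: materialize the rows visible at a given version."""
        return [row for fid in self.manifests[version] for row in self.fragments[fid].rows]


ds = VersionedDataset()
v1 = ds.append([{"a": 1}, {"a": 2}])
v2 = ds.append([{"a": 3}])
print(ds.read(v1))  # [{'a': 1}, {'a': 2}]
print(ds.read(v2))  # [{'a': 1}, {'a': 2}, {'a': 3}]
print(len(ds.fragments))  # 2: both versions share the first fragment
```

In this toy, each new version costs only the appended rows plus one small manifest. Keeping old versions around therefore never duplicates data that already exists.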
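The filter-then-take pattern named in point 2 works in two steps. First, a predicate is evaluated on one column to get the matching row ids. Then only those rows are "taken" from the other columns. The sketch below works over in-memory lists (the table layout and `filter_then_take` are hypothetical) and counts how many cell values it touches. It does not model file I/O. In a real file format, the cost of the take step depends on how cheaply individual rows can be fetched.

```python
from typing import Callable, Dict, List, Tuple

Table = Dict[str, List[object]]  # column name -> values (toy columnar layout)


def filter_then_take(
    table: Table,
    filter_col: str,
    predicate: Callable[[object], bool],
    take_cols: List[str],
) -> Tuple[List[dict], int]:
    """Return matching rows plus the number of cell values read (hypothetical helper)."""
    reads = 0
    # Step 1 (filter): scan only the filter column to collect matching row ids.
    row_ids = []
    for i, value in enumerate(table[filter_col]):
        reads += 1
        if predicate(value):
            row_ids.append(i)
    # Step 2 (take): fetch just those row ids from the requested columns.
    rows = []
    for i in row_ids:
        row = {}
        for col in take_cols:
            row[col] = table[col][i]
            reads += 1
        rows.append(row)
    return rows, reads


table: Table = {
    "label": ["cat", "dog", "cat", "bird", "dog", "cat"],
    "image": ["img0", "img1", "img2", "img3", "img4", "img5"],
    "caption": ["c0", "c1", "c2", "c3", "c4", "c5"],
}
rows, reads = filter_then_take(table, "label", lambda v: v == "dog", ["image", "caption"])
print(rows)   # [{'image': 'img1', 'caption': 'c1'}, {'image': 'img4', 'caption': 'c4'}]
print(reads)  # 10 (6 label values + 2 rows x 2 columns), versus 18 for a full scan
```

The saving grows as the filter becomes more selective and the taken columns (images, embeddings) become larger.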
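For reference, the computation a vector index is meant to speed up is top-K nearest-neighbor search. The exact brute-force baseline, which is what you fall back to without any index, compares the query against every vector. This sketch uses squared Euclidean (L2) distance and `heapq.nsmallest`. The function names are hypothetical, and the sketch says nothing about how Lance's own index is built.

```python
import heapq
from typing import List, Sequence, Tuple


def l2_squared(a: Sequence[float], b: Sequence[float]) -> float:
    """Squared Euclidean distance (same ranking as L2, without the sqrt)."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def brute_force_top_k(
    vectors: Sequence[Sequence[float]], query: Sequence[float], k: int
) -> List[Tuple[int, float]]:
    """Exact top-k (hypothetical helper): (row id, squared distance), nearest first.

    Costs O(n * d) per query, which is the work a vector index tries to avoid.
    """
    scored = ((l2_squared(vec, query), i) for i, vec in enumerate(vectors))
    return [(i, dist) for dist, i in heapq.nsmallest(k, scored)]


vectors = [[0.0, 0.0], [2.0, 2.0], [5.0, 5.0], [0.5, 1.0]]
print(brute_force_top_k(vectors, query=[0.0, 1.0], k=2))  # [(3, 0.25), (0, 1.0)]
```

`heapq.nsmallest` keeps only `k` candidates at a time, so memory stays small. Time, however, still grows linearly with the number of vectors, which is why an index matters at SIFT1M scale.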